Technology has changed the way that police officers do just about everything. Not too long ago, officers didn’t even have computers at their stations, much less technology such as facial recognition.

This tech has now been deployed in agencies around the world but, as with many innovations, its uses have opened an ongoing debate regarding civil rights.

Accurate Facial Recognition Approved by Americans

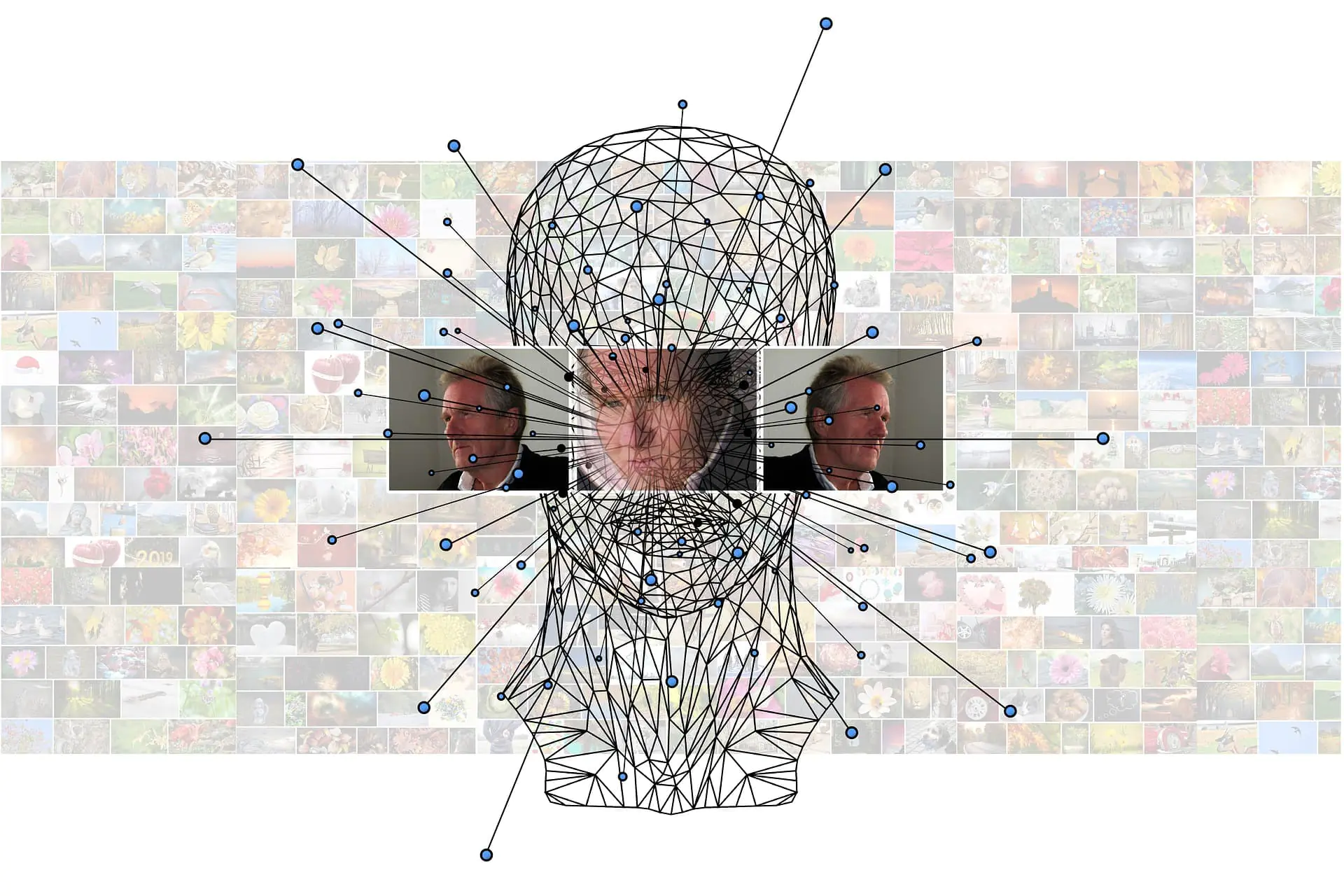

(Photo by NEC Corporation of America with Creative Commons license)

A recent Pew Research Center survey found that Americans support the use of facial recognition by law enforcement, but not by technology companies or advertisers.

According to the poll, 56% said that they at least ‘somewhat’ trust police and officials to use these technologies responsibly. A similar share of the public, 59%, said that it’s acceptable for law enforcement to use facial recognition tools to assess security threats in public spaces (with an additional 15% not being sure).

Despite some examples in which the software has misidentified individual people, most Americans consider the tools to be relatively effective. Roughly three-quarters of U.S. adults, 73%, think facial recognition technologies are at least somewhat effective at accurately identifying individual people.

Americans are also more willing to embrace the tech if it works the way it’s supposed to. According to a survey by the Center for Data Innovation, more Americans agree with the use of facial recognition technology if the software is right 100% of the time than if it is right only 80% of the time, a scenario in which 39% agreed.

“The survey results suggest that one of the most important ways for police to gain public support for using facial recognition technology in their communities is to use the most accurate tools available,” said Daniel Castro, Director of the Center for Data Innovation.

The Facial Recognition Ban

In May, San Francisco banned city agencies such as police and sheriff’s departments from using facial recognition technologies. However, the ban didn’t affect cameras installed by businesses or individuals.

The ban is part of a broader package of rules introduced in January by Supervisor Aaron Peskin: agencies also need to publicly declare the intended use of surveillance tech and gain approval from the board. More recently, California lawmakers temporarily banned state and local law enforcement from using facial recognition software in body-worn cameras.

The technology has been used by law enforcement to spot fraud and identify suspects, but critics say that recent advances in AI have transformed the technology into a dangerous tool that enables real-time surveillance. Bernie Sanders is the first presidential candidate to call for a complete ban on the police use of facial recognition as part of his campaign’s broader plan for criminal justice reform. If elected president, Sanders specifically pledges to “ban the use of facial recognition software for policing.”

The Latest Use of Facial Recognition

Facial recognition has changed policing around the world: it has been used to find missing children and to spot criminals in crowded places. The latest deployment of the technology is a facial recognition app from South Wales Police.

The department is testing the app with 50 officers, allowing them to identify potential suspects in real time while on patrol, without needing to return to the station. How does it work? Officers run a snapshot of a person against a database of suspects, called a watchlist, and find potential matches even if the individual gives false or misleading information.
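For readers curious about what a watchlist check can look like in software, here is a minimal Python sketch. It is not the NEC software or the South Wales Police app, whose internals have not been published. It assumes, as is common in face recognition systems, that each face photo has already been converted into a numeric vector (an "embedding"), and it compares the snapshot's vector to each watchlist entry using cosine similarity. The names `WatchlistEntry` and `find_potential_matches`, the three-number vectors, and the 0.8 threshold are all hypothetical, chosen only for illustration.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class WatchlistEntry:
    """One hypothetical watchlist record: a label plus a face embedding."""
    label: str
    embedding: List[float]


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Return the cosine similarity of two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def find_potential_matches(
    probe: List[float],
    watchlist: List[WatchlistEntry],
    threshold: float = 0.8,  # assumed cut-off, not a published value
) -> List[Tuple[str, float]]:
    """Return (label, score) pairs scoring at or above the threshold, best first."""
    scored = [(e.label, cosine_similarity(probe, e.embedding)) for e in watchlist]
    matches = [(label, score) for label, score in scored if score >= threshold]
    return sorted(matches, key=lambda pair: pair[1], reverse=True)


# Toy data: real embeddings have hundreds of dimensions, not three.
watchlist = [
    WatchlistEntry("entry-A", [1.0, 0.0, 0.0]),
    WatchlistEntry("entry-B", [0.0, 1.0, 0.0]),
]
snapshot = [0.9, 0.1, 0.0]  # stands in for the embedding of an officer's photo

matches = find_potential_matches(snapshot, watchlist)
for label, score in matches:
    print(f"Potential match: {label} (similarity {score:.2f})")
```

The threshold is the key design choice in a sketch like this: setting it higher produces fewer false matches but misses more true ones, which is why a result is only ever a "potential" match that an officer still has to confirm.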

In most UK law enforcement use cases, the Automatic Facial Recognition software is provided by Japanese firm NEC, although the BBC has confirmed that the app’s interface has been designed in-house by South Wales Police.

“This new app means that, with a single photo, officers can easily and quickly answer the question of, ‘Are you the person we are looking for?’” said Deputy Chief Constable Richard Lewis in a press release. “I want to stress that our police officers will only be using the new technology in instances where it is both necessary and proportionate to do so and always with the end goal of keeping that particular individual, or the wider public, safe… We have given additional training to the officers who are part of the trial and will closely monitor the use of the app to assess its effectiveness,” he added.